Guide for Selecting an Effective Crime Prevention Program

© Her Majesty the Queen in Right of Canada, 2015

Cat. No.: PS114-15/2015E-PDF

ISBN Number: 978-1-100-25612-2

Table of contents

- Introduction

- The Evidence-Based Approach in Crime Prevention

- Step 1. Assessing the Local Situation

- Step 2. Selecting an Effective Crime Prevention Program

- Step 3. Implementing an Effective Crime Prevention Program

- Step 4. Building Program Sustainability

- Conclusion

- Appendix 1. Classification Systems of Evidence-Based Crime Prevention Programs

- Appendix 2. Cost-Effectiveness and Cost-Benefit Analysis

- Appendix 3. Program Implementation

- Appendix 4. Program Sustainability

- References

Abstract

For several years, the evidence-based approach has been used in the crime prevention domain to support programs that have demonstrated their effectiveness in reducing crime and improving community safety. The evidence-based approach relies on high scientific standards: conclusions about program effectiveness must come from rigorous evaluation studies. Publicly available registries and databases disseminate information on evidence-based programs widely. The notion of “evidence” is operationalized (i.e., made concrete and measurable) through a continuum of program effectiveness, which is why programs are grouped into different categories, from “model programs”, to “promising programs”, to “ineffective programs”. Local authorities therefore need to understand the evidence-based approach well and apply it appropriately in the programs and practices they implement in their communities.

From this conceptualization multiple questions arise, some of which go unaddressed. For example, among all crime prevention programs supported by evidence, how do you select the most appropriate program that will meet the demands of funders, the needs of the population, and the organizational capacities of the lead organization? Where can information on programs and practices supported by evidence be found? How do we ensure that effective strategies and potential challenges will be considered?

This report has been developed to provide some answers to questions on the use of evidence-based programs by practitioners and policy makers. Evidence-based crime prevention programs can achieve better results than traditional programs, but to achieve the expected results, the right program must be selected for the right clientele and implemented using effective strategies. This report, without being exhaustive, provides practical guidance to help individuals better understand the evidence-based approach in crime prevention, proposes a step-by-step framework to guide them during program selection and implementation, and also suggests key elements for sustainability. This guide is an updated and more detailed version of a publication previously posted on Public Safety Canada's website (Guide to Select Promising and Model Crime Prevention Programs; 2012).

Author's Note

The views expressed are those of the authors and do not necessarily reflect those of Public Safety Canada. Correspondence concerning this report should be addressed to:

Research Division, Public Safety Canada

269 Laurier Avenue West

Ottawa, Ontario, K1A 0P8

email: PS.CSCCBResearch-RechercheSSCRC.SP@ps-sp.gc.ca

Introduction

Crime prevention, with its vast scope, can be defined as the proactive strategies and measures that seek to intervene and modify risk factors among individuals, families, and/or in the environment in order to reduce the propensity to offend or re-offend, and/or the likelihood that criminal acts will be committedNote 1. Whether the focus is preventing youth violence, youth gang involvement or recidivism, the selection of the most appropriate crime prevention program(s) and/or practice(s)Note 2 is important, and this decision should be based on certain key factors. There are many prevention and intervention options available, and it can sometimes be difficult to determine what should be done. As there are literally hundreds of different crime prevention programs, varying widely in type, it is important to take stock of and identify key elements to assist in selecting the most appropriate program.

The decision-making process to select a crime prevention program is complex, requires a significant investment of time and commitment, and relies on various factors, including: the local context of crime and safety issues; the organizational resources and capacities of the lead organization; and the suitability and alignment of the program (for example, with the targeted clientele, risk factors and organizational capacities). For example, to address local safety issues, communities may consider a wide range of possible approaches and strategies, but since not everything can be done, only a limited number of specific program(s)/intervention(s)/initiative(s) will be put forward to advance the crime prevention agenda. But what program(s) should be chosen, and based on what criteria? In addition, the question of program continuity after the initial funding (in other words, program sustainability) should be addressed in the early stages and considered as another important factor in the decision-making process. Potential programs for implementation should therefore be compared in order to identify those with the highest probability of continuation. Indeed, it seems logical to choose a program whose continuity can be more easily assured.

While it is increasingly recognized that projects based on rigorous evidenceNote 3 obtain better results, there has been relatively little investigation into the local context in which programs are selected or into the criteria that should influence or orient this decision. Until now, and especially in Canada, no “comprehensive road map” has provided policy makers and local communities with clear guidance on using the evidence-based approach. Research and evaluation studies have largely focused on presenting program outcomes and impacts (e.g., in terms of crime reduction), and only a few have investigated the process of choosing a program and explained the rationale for the selection.

This report has been written to fill these gaps and to better understand the decision-making process. Although not exhaustive, it presents a step-by-step framework and provides key considerations and questions designed to help stakeholders and communities make the most informed decisions possible when selecting a program for implementation in their community.

Who is this Document For?

As a tool to help future local program implementers choose the most appropriate program(s), this document is intended for anyone interested in the process of selecting an evidence-based program in the field of crime prevention, or in a related field, and in learning more about best practices and strategies for successful program selection, implementation and sustainability.

Individuals who may benefit from this guide include members of an organization working collaboratively to identify, select and implement a program that meets the needs of their local population, as well as funders and members of the community.

How to Use this Document

This document proposes a step-by-step framework. Its central objective is to facilitate and guide the selection of an evidence-based program that best matches local needs, and to provide advice on program implementation and sustainability. As its title suggests, this document should be used as a guide and reference document from the early stages of the decision-making process.

The Evidence-Based Approach in Crime Prevention

An evidence-based approach refers to programs and practices that are proven to be effective through strong research and evaluation methodology and have produced consistently positive patterns of resultsNote 4. Identifying “what works” and applying the evidence-based knowledge to program development is critically important to ensure the use of best practices in several fields: crime prevention and corrections, health and mental health services, and education.

The Evidence-Based Approach to Crime Prevention: What is it?

The evidence-based crime prevention approach attempts to ensure that the best available evidence is considered in any decision to implement a program designed to prevent crime. An evidence-based approach requires that the results of rigorous evaluations be rationally integrated into the decisions policymakers and practitioners make about which interventions to recommend. (Welsh, 2007)

It is well demonstrated and recognized that the more rigorous a study's evaluation design (e.g., randomized controlled trials, quasi-experimental designs), the more compelling the research evidence. In recent years, the advancement of the evidence-based approach has been facilitated by methodological developments (for example, systematic reviews and meta-analyses) and by technological advancements in the form of public evidence-based registries/databases such as Blueprints for Healthy Youth Development and Crime Solutions. Appendix 1 presents examples of these registries as well as the definitions used to describe the different types of programs (e.g., model, promising and ineffective programs)Note 5.

Applying an evidence-based approach also means applying well-supported principles. For example, when working with offenders and with youth at risk of violence and delinquency, evidence-based principles include using validated screening and assessment tools to determine risks and needsNote 6, applying the risk-need-responsivity framework when matching participants to interventions (dosage and intensity of individualized interventions), and obtaining the buy-in of multiple agenciesNote 7.

An evidence-based program is not an “all or nothing” static notion but rather reflects a concept that operates on a continuum of effectivenessNote 8. This continuum of effectiveness is a tool to determine where a crime prevention program falls on the evidence-based continuum. At one end of the continuum, there are the best programs which were rigorously evaluated and achieved statistically significant results. On the other end of the continuum, there are ineffective programs which were also rigorously evaluated but for which the results demonstrated that they had no effects or, more problematically, had harmful effects for the participants. Finally, along the continuum (between the best and ineffective programs) are promising programs that have achieved encouraging results but for which other replications and strong evaluation studies are still needed.
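The continuum described above can be read as a simple decision rule. The following minimal Python sketch restates that rule only to illustrate its logic. The `Result` categories, the single `rigorous_design` flag and the function name `place_on_continuum` are hypothetical simplifications. Actual registries, such as those presented in Appendix 1, apply their own, more detailed rating criteria. Programs with no evaluation results are labelled as unknown, because without evidence their position on the continuum cannot be determined.

```python
from enum import Enum


class Result(Enum):
    """Hypothetical summary of what an evaluation found."""
    SIGNIFICANT_POSITIVE = "statistically significant positive effects"
    ENCOURAGING = "encouraging but not yet conclusive results"
    NULL_OR_HARMFUL = "no effects or harmful effects"
    NOT_EVALUATED = "no evaluation results available"


def place_on_continuum(rigorous_design: bool, result: Result) -> str:
    """Illustrative (hypothetical) placement of a program on the continuum.

    rigorous_design: True if the evaluation used a strong design
    (e.g., a randomized controlled trial or quasi-experimental design).
    """
    if result is Result.NOT_EVALUATED:
        # Without evidence, effectiveness cannot be known.
        return "unknown (no evidence)"
    if rigorous_design and result is Result.SIGNIFICANT_POSITIVE:
        # One end of the continuum: rigorously evaluated, significant results.
        return "best"
    if rigorous_design and result is Result.NULL_OR_HARMFUL:
        # Other end: rigorously evaluated, no effects or harmful effects.
        return "ineffective"
    if result in (Result.SIGNIFICANT_POSITIVE, Result.ENCOURAGING):
        # In between: encouraging results, but further replications and
        # strong evaluation studies are still needed.
        return "promising"
    # Remaining case: null or harmful results from a weak design,
    # which say little either way.
    return "unknown (weak evidence)"


if __name__ == "__main__":
    print(place_on_continuum(True, Result.SIGNIFICANT_POSITIVE))  # best
    print(place_on_continuum(False, Result.ENCOURAGING))  # promising
```

In this sketch, a weakly evaluated program with positive results is treated as "promising" rather than "best". This mirrors the point above that such programs still need replications and strong evaluation studies.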

In order to judge the degree of effectiveness, a strong evaluation design is needed and results must be supported by evidence. Knowing what doesn't work is just as important as knowing what works best. Supporting ineffective programs costs taxpayers billions of dollars, and some programs may cause harmful effects for the participants. There is an enormous cost (social and financial) to pursuing and supporting programs and policies that are not rationally basedNote 9. Evidence-based policymaking should use the best available research and information on program results to guide decisions at all stages of the policy processNote 10.

In Canada, the fact remains that a large number of crime prevention programs are not supported by the evidence-based approach. An unpublished preliminary inventory of crime prevention programs in CanadaNote 11 indicates that nearly 60%Note 12 of the sampled programs have never been evaluated in the Canadian context. In other words, no results from evaluation studies support these programs, and in the absence of evidence, it is impossible to know how effective they are (i.e., where they fall on the continuum of effectiveness). An outcome evaluation helps to answer questions such as “is this program working?” and aims to find evidence of changes in clients'/participants' behaviours and of whether those changes result from their experience in the programNote 13. Without these studies and strong performance monitoring systemsNote 14 in place, the development of knowledge regarding effective crime prevention programs in Canada is hindered.

Cost-Effectiveness of the Evidence-Based Approach

The evidence-based approach is also associated with cost-effectiveness and cost savings. Regardless of the area of expertise, it makes the most sense to invest in a service or program that has already demonstrated its effectiveness. The scientific evidence on which programs work in addressing risk factors in at-risk populations should be used to inform both project development and funding decisions.

Investments in proven, tested, research-based programs not only lead to improved outcomes but are also associated with significant cost savings in taxpayer dollars. Appendix 2 provides additional detail about cost-effectiveness and cost-benefit analysis. One of the greatest social benefits of implementing evidence-based programs for at-risk youth is the cost savings provided to taxpayersNote 15. Investing in evidence-based programs is the key to reducing victimization and increasing public safety while simultaneously managing corrections costsNote 16. More specifically, cost-benefit analysis provides a tool for analysts and policymakers to evaluate crime prevention and criminal justice programs from an economic perspective in order to guide decisions on whether to modify, expand or terminate projectsNote 17.

In the United States, the Washington State Institute for Public Policy (WSIPP)Note 18 has provided credible evidence that for each dollar spent on evidence-based prevention programs, more than a dollar's worth of benefits will be generated (i.e., the benefits exceed the costs). This organization has undertaken numerous cost-benefit analyses and systematic comparisons of crime prevention programs to inform policy decisions.
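The finding that benefits exceed costs can be expressed as two simple quantities. The first is the net benefit (benefits minus costs). The second is the benefit-cost ratio (benefits per dollar spent), which is greater than 1 when benefits exceed costs. The Python sketch below computes both. The function name and the dollar figures are hypothetical. The sketch deliberately omits the discounting of future benefits, sensitivity analysis and other adjustments that a full cost-benefit analysis would normally include.

```python
def benefit_cost_summary(benefits: float, costs: float) -> tuple[float, float]:
    """Return (net benefit, benefit-cost ratio) for a program.

    benefits: estimated monetary value of the program's benefits
              (e.g., avoided justice-system and victimization costs).
    costs:    total cost of delivering the program.
    """
    if costs <= 0:
        raise ValueError("costs must be a positive amount")
    net_benefit = benefits - costs  # dollars gained (or lost) overall
    ratio = benefits / costs        # benefits generated per dollar spent
    return net_benefit, ratio


if __name__ == "__main__":
    # Hypothetical figures, for illustration only.
    net, ratio = benefit_cost_summary(benefits=250_000, costs=100_000)
    print(net, ratio)  # 150000 2.5
    print(ratio > 1)   # True: benefits exceed costs
```

With these hypothetical figures, a program costing $100,000 that generates an estimated $250,000 in benefits yields a net benefit of $150,000. That corresponds to $2.50 in benefits for each dollar spent.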

The Step-by-Step Framework

Overall, there are multiple reasons to support the use of the evidence-based approach. Below are some examplesNote 19 of why governmental authorities, at all jurisdictional levels, should pursue an evidence-based agenda:

- Evidence-based programs are based on rigorous studies, and are proven to be effective and produce positive results.

- If implemented properly, with technical assistance and strict adherence to the program model, these programs can produce significant improvements and substantially reduce crime. In addition, evidence-based programs are sound investments.

- These programs have proven to be successful in even the most challenging cases, providing meaningful change for thousands of at-risk and vulnerable families and communities.

In order to provide guidance for local communities to properly select the most appropriate evidence-based program, the proposed step-by-step framework is based on the following four stepsNote 20:

- Step 1. Assessing the Local Situation: What are the crime and safety issues, and how are they linked with other issues in the community? What segment(s) of the population need to be reached? What are the main characteristics of this population? What are the local resources and initiatives in crime prevention? This component addresses the local context and highlights the importance of conducting a local needs assessment.

- Step 2. Selecting an Effective Crime Prevention Program: What program works for the identified priorities and issues? Which program will best match the characteristics and needs of the identified population? This component addresses responses to the local assessment and identifies current best practices and programs in “What Works” clearinghousesNote 21. At this step, the final decision concerning the program to be implemented will be made.

- Step 3. Implementing an Effective Crime Prevention Program: What are the factors for successful implementation? This component addresses the implementation drivers and conditions that may positively affect the program implementation process. Programs do not all follow the same path to successful implementation. This component takes into account the lessons learned from other implementation experiences and is based on the key elements of successful replication.

- Step 4. Building Program Sustainability: What elements encourage the continuity of a program? This component addresses the sustainability factors and keys to success. Not all programs, even those that are successful, have the same probability of being incorporated into existing systems or receiving sustainable long-term funding. During the selection process, it is important to take these elements into consideration. In fact, even if this step appears to be a logical continuation once a program ends, sustainability must be assessed early in the program selection process. Moreover, even if nothing is guaranteed, it makes more sense to choose a program whose activities and services could be maintained by the lead organization after the initial funding ends.

Figure 1. Four Steps of the Evidence-Based Crime Prevention Approach

Figure 1, a basic radial chart with four boxes radiating from a central box, shows the four steps in the evidence-based crime prevention approach to select the most appropriate program.

Central box: Evidence-based crime prevention approach

Step 1 (top box): Assessing the local situation

Step 2 (right box): Selecting an effective crime prevention program

Step 3 (bottom box): Implementing an effective crime prevention program

Step 4 (left box): Building program sustainability

While greater effort may be required to implement an evidence-based program, the benefits for the participants as well as for the local communities are also greater. Well-planned programs can prevent crime and victimization, and promote community safety. But in order to be effective and generate positive outcomes, it is important to select the most appropriate evidence-based program that will best fit the needs of the local communities and populations and will be aligned with organizational capacities.

Step 1. Assessing the Local Situation

Analysis of the Local Situation: What Is It?

The portrait of the local situation provides an overview of the issues in the community, emerging and current risk behaviours or problem situations for specific populations, risk factors and the context in which they occur. Even more importantly, this assessment should indicate what appears to influence people's decisions to adopt risky behaviours. The portrait must also provide an inventory of resources and programs in place in the community.

The first step is the development of a local portrait/diagnostic, also called an environmental scan or a strategic needs assessment, using a variety of data collection methodsNote 22. This portrait should provide an overview of the local reality, the nature and trends of existing and emerging crime and safety issues, the characteristics of the populations involved, the contributing factors, the services already in place, and the resources available in the community.

The importance of the local analysis cannot be overstated. A lack of understanding of the local crime and safety issues and context can result in the selection of the wrong type of prevention program for implementation. If the nature, characteristics and extent of the issues are not properly defined and established, the selected program might not target the most appropriate client population and could lead to the selection of inappropriate interventions. Not only would the program then fail to generate the expected results, it could have counter-productive outcomes. Research on correctional interventions in communities has demonstrated that intervening with people who do not need support (for example, low-risk offenders) can have negative repercussionsNote 23.

According to a research project analyzing process evaluations from a sample of crime prevention projectsNote 24, it appears that 23% of the projects carried out a local needs assessment before implementation, 20% did not, and for 58% of the projects this information was not availableNote 25.

Conducting an analysis of the local community context is critical. There are several sources of information that can be consulted (such as police, health and social services, educational system, and socio-economic reports and data) and it is important that the information and data used are up-to-date, as objective as possible, and based on valid data collection methods.

Analysis of the Local Situation: Examples of Questions

- What is the nature and extent of crime and safety issues?

- Are there times and/or specific areas where the problematic behaviours occur more often?

- What are the characteristics of the population associated with the problem?

- What are the risk factors (proximal and distal)?

- What services (direct or related) are currently available to address the problem?

Once the main priority issue(s) have been identified, the next step is gathering information to develop a full understanding of the nature and characteristics of the people and circumstances involved. Knowledge of characteristics such as the age, gender and ethnicity of the individuals involved in the situation is critical to program selection. For example, knowing key characteristics of youth involved in violent behaviour would help to select an appropriate program suited to them. Similarly, knowing where youth spend their time, when violent behaviour takes place, and any other factors related to incidences of violence will help to select a program that has the best chance of addressing crime and violence in this specific context.

Before making a decision on the program, it is also important to know what programs and services are currently available in the community. An inventory of existing resources is valuable from a number of perspectives. It can help to identify gaps in services, reduce duplication of work, and help to identify potential partners for a new initiative in the community. Every crime prevention program requires specific resources and it is only with a thorough understanding of what is currently available that an informed decision can be made about what programs are most relevant in a given local community context.

Some key questions to help guide the development of a local portrait/diagnostic are provided in the box above. Comparing the information collected with the program chosen helps ensure that the program is a good fit for the selected group and the community, and provides the rationale to support the implementation of the selected program.

Step 2. Selecting an Effective Crime Prevention Program

With the final report on the analysis of the local situation in hand, the second step is to identify and select the potential program(s) that will be implemented to reduce or prevent the identified problem situations and behaviours. When selecting a program, the evidence-based crime prevention approach ensures that the best available evidence is considered. It is at this stage, for example, that registries of effective programs are most useful to consult, both to gather information on program effectiveness and to better understand operational requirements. Since there are literally hundreds of programs in crime prevention, the following box provides key questions to ask to determine the type of research and evidence that exist to support and demonstrate the effectiveness of candidate program(s).

Selecting an Effective Crime Prevention Program: Examples of Questions

- What evidence and research are available to support the program?

- Is the program rated in any registries/databases?

- What is the level of effectiveness?

- How many impact evaluation studies have been conducted?

- Is cost-effectiveness information available?

- Is the program ready for dissemination?

In addition to ensuring the level of effectiveness of potential program(s) to be implemented, three other variables are often overlooked, yet should guide decision making during the selection process:

- Program suitability and alignment;

- Organizational resources and capacities; and

- Level of program adaptation.

Program Suitability and Alignment

The most important variables affecting the level of suitability are the program's alignment with the capacities of the lead organization, with the main risk factors, and with participant characteristicsNote 26.

The more the program corresponds to the values and objectives of the lead organization, the more it is aligned with the risk factors identified in the needs assessment, and the more appropriate it is to address the characteristics of the selected group, the higher the level of program suitability. The higher the level of suitability, the greater the chances that the program is the best fit. The degree of suitability of potential program(s) is therefore a significant variable to consider when making a final decision. For example, even if a program is classified among the best, low suitability can lead to implementation difficulties, which will negatively affect the achievement of results. The following items related to the suitability and alignment of the program should influence the final choice of the program to be selected.

Suitability and Alignment with the Lead Organization

Suitability and Alignment of the Program: Examples of Questions

- How do the goals and objectives of the program reflect those of the organization?

- How does the program complement other programs and services provided by the organization and by others in the community?

- Are the program duration and intensity appropriate for the potential participants?

- Do the potential participants have the time to fully participate in the program?

- Are the location and time of the program activities appropriate for the potential participants?

- Does the program need to be adapted in order to meet some specific characteristics of the population?

Although it seems obvious, the selected program's alignment with the mission of the lead organization is often neglectedNote 27. The more the program is in line with the organization's philosophy and organizational values, the better the chances that the program will be accepted by staff and others in the community. Similarly, the more the program is targeted to reach a clientele already known to the organization, the better the chances are that the right clientele will be reached.

Another factor to consider is how the program will complement other programs already provided by the lead organization or by other organizations in the community. New programs implemented in a community should address gaps and meet needs that are not currently being addressed. This will help in the development of a comprehensive approach, which, in the long term, could lead to interventions that are more effective and enduring.

Suitability and Alignment with the Risk Factors

The second alignment component looks at how well a program addresses the level and complexity of the risk factors of the intended participants. A key consideration is the duration and intensity of the program. Reducing the impact of certain risk factors (e.g., substance abuse or impulsivity) requires interventions with a duration long enough to change participant behaviours. Understanding the main risk factors present and what types of interventions are suitable to address them are important elements for the selection of an appropriate program.

Suitability and Alignment with the Group of Participants

The last component related to suitability and alignment is the fit with the characteristics of the selected population/group. Every program is developed and designed to work in certain settings and with specific populations; interventions for youth in schools will differ from those for street youth. It is important to increase the likelihood that those targeted will participate for the entire length of the program, as required to achieve the expected changes. In other words, the effort required to participate fully in the program for the required amount of time must correspond to the effort that the selected group is able to put in. For example, what are the chances that vulnerable youth will participate in a long-term program with regular meetings? It is also important to ask whether the time and location of meetings suit the intended participants.

Organizational Resources and Capacities

Organizational resources and capacities represent the other type of factors that must be considered when selecting an evidence-based program.

Organizational Resources and Capacities: Examples of Questions

- Are there staff within the organization capable of implementing this program?

- What qualifications are recommended or required?

- How many employees are needed? What is the recommended ratio of staff to participants in the program?

- Can the program be implemented in the time allotted?

- Is the infrastructure to collect and monitor data in place and adequate?

- What are the costs associated with the implementation of the program, training, and purchase of the documents/license?

The analysis of organizational resources and capacities is too often a missing piece. Evidence-based programs vary in their complexity and in the level and type of effort and resources they require for implementation. Not all programs can be implemented everywhere, and it is important to consider organizational resources and capacities.

Implementing an evidence-based program requires investments with respect to money, material, time, and human resources. Even if the program has a high degree of suitability and alignment, if the lead organization and its partners lack the necessary resources and capacities, the chances of obtaining the expected results are limited.

Various organizational factors facilitate the implementation of a high-quality program, such as:

- Operational capacity – e.g., qualified personnel, good staff retention, training and supervision, a monitoring system in place;

- Financial capacity – e.g., appropriate financial controls, qualified personnel to monitor and report financial information;

- Previous experience in the implementation of similar programs and with the same clientele; and

- Sound and effective partnerships and networks in the community.

Degree of Program Adaptation

The degree of program adaptation is a major factor to consider when selecting a program. Some programs are designed to be more flexible and can be adapted without affecting key components or compromising the expected results. Conversely, other programs are more rigid and should not be subject to adaptations, as these changes are likely to affect the achievement of results. Depending on the context in which the program will be implemented, the opportunities to tailor the program to suit the characteristics or special circumstances must be considered during the selection process.

Beyond the degree of flexibility of the program, studies have also shown that some adaptations are acceptable, while others are risky. Table 1 below presents examples of these adaptations.

Before any adaptations are made, it is essential to understand the program's rationale so as not to produce unexpected or unintended results. Failure to respect the core program components could lead to what is referred to as “program drift”, and the expected results of the evidence-based program may not be achieved. Modifying a program, even when adaptations are needed, requires additional resources (personnel, time, and funds) as well as additional planning and evaluation to monitor the adaptations and evaluate the outcomes.

| Acceptable Adaptations | Unacceptable or 'Risky' Adaptations |
|---|---|

Adapted from O'Connor et al. (2007)

Adapting a given program to better reflect the culture and values of the selected population, for example, requires precautions. Except for a few culturally sensitive programs, the majority of evaluated preventive interventions were not developed with ethno-cultural dimensions in mind. Additionally, when culturally appropriate programs have been evaluated, few evaluations used rigorous standards. In many cases, if a community wishes to add cultural modifications to a program, the adaptations must be introduced with the consent, and under the supervision, of the program developers. Adaptations should always be documented and monitored through performance data and evaluations.

Step 3. Implementing an Effective Crime Prevention Program

By this step, the selection of the program should be almost complete and emphasis should now be placed on how the selected program will be implemented by the organization.

Program Implementation: What is it?

Program implementation is not a single, linear event but rather a dynamic, iterative process that can extend over a period of two to four years. Implementing a program involves structural and procedural changes: programs are not plug and play; a number of changes will take place in the organization, and various factors will influence the implementation process, positively or negatively (Fixsen et al., 2005).

It has been well established that the quality of the implementation of crime prevention programs affects the achievement of the expected results (Note 28). Implementation science has shown that negative or mixed results from a program's impact evaluation may be linked to poor-quality implementation and do not necessarily mean that the program model itself does not work (Note 29). For example, a promising program that is implemented effectively has a greater chance of producing positive results than a model program whose implementation is constrained by multiple gaps and limitations. In other words, even the most effectively designed interventions can produce poor results when improperly implemented (Note 30). Likewise, a program evaluated as having neutral effects may obtain positive results when replicated under different conditions.

When thinking about implementation, two sets of activities and outcomes must be distinguished: program-level activities, from which the program outcomes emerge, and implementation-level activities, from which the implementation outcomes emerge. Implementation outcomes need to be identified and assessed separately from program outcomes. In other words, when programs are unsuccessful, it is important to ask whether the failure is due to services or treatments that did not work (program activity failure) or to services or treatments that were not implemented properly (implementation failure) (Note 31).

Meta-analyses examining the implementation process have shown the need for a multi-level approach that takes into consideration all of the factors that can positively or negatively influence program implementation, such as the characteristics of the program, practitioners, and communities; organizational capacity; and training and technical assistance (Note 32). Appendix 3 presents several factors that should be considered when implementing a program, as well as the implementation drivers that should be in place (Note 33).

Program Implementation: Examples of Questions

- How will participants be reached and engaged in the program?

- Does a referral form need to be developed and used? By whom? Based on what eligibility criteria?

- Is management from the lead organization engaged and flexible?

- Is there a mandatory risk assessment tool to use with participants? Are specific qualifications required to use it?

- Do incentive strategies need to be developed? What types? How much money should be allocated (budget planning)?

- Which partners must be involved? Are protocols/memorandums of understanding already in place? Are the roles and responsibilities of each partner clearly articulated?

Programs vary in complexity, and this affects their implementation. Implementation conditions must be considered a key element in whether or not results are achieved. Effective implementation strategies increase the likelihood of success and lead to better results for participants. Moreover, a dynamic, committed, and open context that is amenable to change will facilitate a program's integration.

In order to build a body of Canadian knowledge on effective crime prevention programs and efficient implementation strategies, rigorous process and impact evaluations should be conducted together, and more systematically. In addition, strong monitoring systems should be established to ensure adequate implementation. This monitoring should ensure that evidence-based programs are carried out with fidelity to their design and incorporate the elements that are critical to their effectiveness. Providing training and technical assistance and holding regular meetings to review performance data are examples of key strategies that support effective program implementation (Note 34).

Step 4. Building Program Sustainability

Program Sustainability: What is it?

There is no standard approach for defining or conceptualizing sustainability. In some situations, it is the continuity of a program or service over time – the ability to carry on program services through funding and resource shifts or losses. In others, it is about institutionalizing services; creating a legacy; continuing organizational ideals, principles, and beliefs; upholding existing relationships; and/or maintaining outcomes. (US Department of Health and Human Services, Office of Adolescent Health, 2014a,b)

Sustainability is about ensuring that organizations implement strategies that contribute to long-term success. Although it may seem surprising, sustainability needs to be considered as early as the program selection stage.

Not all successful programs have the same likelihood of being incorporated into or combined with existing systems and/or of receiving sustainable funding. When a program is selected, consideration needs to be given to whether it could be maintained and included in the organization's structure once the initial funding ends. Some programs may fit into organizational structures more easily than others; this is why the alignment and suitability of the program with the organizational structure is an important factor that should not be minimized.

Identifying strategies for continuing to deliver effective programs and services before funding cycles draw to a close is often cited as a significant challenge. The cost of the program, while not the only variable, is an important factor, since programs requiring a major financial investment appear more likely to be discontinued after the funding runs out. But sustainability is not only a matter of funding; it also entails creating and maintaining momentum by reorganizing and optimizing resources, and leveraging partnerships and resources to continue programs, services, and/or strategic activities.

Sustainability can mean different things in different contexts and can be reflected in numerous ways (Note 35):

- The institutionalization of all or part of a program;

- Momentum that mobilizes and leads to a reorganization (for example, in services provided);

- The continuation of all or some components of the project through an ongoing funding arrangement;

- The transformation of policies, governance structures, fiscal arrangements and service practices in place;

- Adapting to constant changes in technology, policies, and funding streams; and

- Sharing positive outcomes to encourage local buy-in and provide high-quality services.

Building a Sustainability Plan

Each organization should develop its own sustainability plan to meet its vision for sustainability and to respond to the unique needs of the organization and its programs. There is no “one size fits all” sustainability strategy.

Toward Program Sustainability: Examples of Questions

- Must all or certain project activities be continued? Which ones? Why?

- What does the institutionalization of this program entail?

- What resources are available and what resources are needed to maintain the program?

- Who are the stakeholders that we can count on to enable program sustainability?

- Could resources be pooled with some partners?

- What mix of potential solutions is needed to maintain the level of resources required to carry on the activities?

- What potential challenges can already be envisioned, and what are potential solutions?

- Are there any precedents that could provide inspiration for sustaining this program?

- Does the sustainability plan meet the needs of the organization?

Sustainability planning should be as tangible as implementation planning. As with program implementation, planning for sustainability is not a single event or a linear process. Rather, it is a continuous and dynamic process in which many activities occur simultaneously (Note 36). Sustainability planning must be flexible and specifically tailored to local needs and to the environment within which each organization operates. Appendix 4 presents some of the key factors that influence whether services, programs, or activities will be sustained over time. Finally, one of the most important parts of planning for sustainability is keeping the sustainability plan active. The plan should be seen as a living document, not put aside once completed, and should be continually reviewed and revised to reflect the changing conditions in which the program operates.

Conclusion

Contemporary crime prevention programs are based on a scientific approach from which tangible and quantifiable results exist. Evidence is available to demonstrate effectiveness in preventing and reducing crime. However, the evidence-based approach also requires that the selection and implementation of the program be directed by key program elements. The first step is to ensure that the selected program is aligned with the needs identified in the local assessment and with the capacities of the lead organization. These two aspects seem obvious but are often not well established during the selection process. Neglecting these variables can affect the implementation and consequently, can affect the final results to be achieved by the program.

Several factors should influence and guide the final selection of the program to be implemented: the suitability and alignment of the program with the realities and needs of the potential participants, with the objectives and resources of the lead organization, and with the organizational and social context in which the new prevention program is to be integrated; and the level at which the program can be adapted to address specific situations and characteristics. Understanding how to identify an evidence-based crime prevention program, how to ensure it is a good fit with the specific population and the organization, how to implement it, and how to sustain it are critical elements for practitioners as well as for policymakers.

In the crime prevention domain, a wide variety of resources exist, such as registries/databases, guides, and checklists, that provide user-friendly tools and promote the use of prevention programs that have been rigorously tested in local communities. In Canada, comprehensive tools aimed at practitioners and policymakers that explain the evidence-based approach are limited; this is one reason why this guide was developed. However, given the ever-changing nature of the field, this report should not be considered a static document.

The way in which a program is implemented has a significant impact on whether it achieves the expected results. The importance of the implementation process for achieving program outcomes is increasingly recognized, and experts are now focusing more on “how” and in what context effective programs are implemented. Mixed results are not automatically due to a poorly designed program; they can also be caused by implementation challenges. To ensure that implementation stays on track, data on specific implementation indicators should be collected and monitored frequently.

In all areas of social science, including the prevention of crime and delinquency, the evidence-based approach requires the development and accumulation of new knowledge as well as the transfer and application of this knowledge. Without mechanisms to disseminate and promote effective programs to practitioners and policymakers, these programs would not be integrated into public policies or even replicated in local communities. While it is essential to maintain up-to-date databases on evidence-based programs and to conduct rigorous outcome evaluations, it is also vital to disseminate these programs and, especially, to promote their implementation in real-world contexts. This is needed in order to address problematic situations and even to prevent the future development or aggravation of certain risk behaviours. Gathering evidence is important, but it is what is done with that evidence that matters most.

Appendix 1. Classification Systems of Evidence-Based Crime Prevention Programs

This appendix presents the most recognized classification systems of evidence-based crime prevention/delinquency programs (Note 37). Table 2 provides an overview of the rating guidelines employed by each of the classification systems of evidence-based crime prevention programs discussed below.

Blueprints for Healthy Youth Development

Website: http://www.colorado.edu/cspv/blueprints/

The Blueprints for Healthy Youth Development is a research project within the Center for the Study and Prevention of Violence, at the University of Colorado Boulder. The Blueprints mission is to identify evidence-based prevention and intervention programs that are effective in reducing antisocial behaviour and promoting a healthy course of youth development.

Each Blueprints program has been reviewed by an independent panel of evaluation experts and determined to meet a clear set of scientific standards. Programs meeting this standard have demonstrated at least some effectiveness for changing targeted behaviour and developmental outcomes.

Crime Solutions and Model Programs Guide

Crime Solutions, Office of Justice Programs

Website: http://www.crimesolutions.gov/default.aspx

Model Programs Guide, Office of Juvenile Justice and Delinquency Prevention

Website: http://www.ojjdp.gov/mpg/

The Office of Justice Programs' CrimeSolutions.gov and the Office of Juvenile Justice and Delinquency Prevention's (OJJDP's) Model Programs Guide (MPG) share a common database and use rigorous research to inform practitioners and policy makers about what works in criminal justice, juvenile justice, and crime victim services.

The two sites provide research on the effectiveness of programs and practices as reviewed and rated by study reviewers and provide easily understandable ratings based on the evidence that indicates whether a program or practice achieves its goals.

Coalition for Evidence-Based Policy – Top Tier Evidence Initiative

Website: http://coalition4evidence.org/

The Coalition for Evidence-Based Policy seeks to increase government effectiveness through the use of rigorous evidence about what works. Rigorous studies have identified a few highly effective program models and strategies. The Coalition advocates many types of research to identify the most promising social interventions.

The Coalition for Evidence-Based Policy also administers the Top Tier Evidence Initiative. The goal of this initiative is to identify social programs that are backed by strong evidence of important impacts on people's lives. Through a systematic review effort launched in 2008, the initiative's expert panel identifies interventions meeting “Top Tier” or “Near Top Tier” classification.

Substance Abuse and Mental Health Services Administration (SAMHSA) National Registry of Evidence-Based Programs and Practices (NREPP)

Website: http://www.nrepp.samhsa.gov/Index.aspx

The National Registry of Evidence-based Programs and Practices (NREPP) is a database of more than 330 mental health and substance abuse interventions. All interventions in the registry have met NREPP's minimum requirements for review and have been independently assessed and rated for quality of research and readiness for dissemination.

The purpose of NREPP is to help practitioners learn more about available evidence-based programs and practices and determine which of these may best meet their needs. NREPP is one way that SAMHSA is working to improve access to information on evaluated interventions and reduce the lag time between the creation of scientific knowledge and its practical application in the field.

| Classification System | Category of Effectiveness | Criteria |
|---|---|---|
| Blueprints for Healthy Youth Development | Model | A minimum of (a) two high-quality randomized controlled trials or (b) one high-quality randomized controlled trial plus one high-quality quasi-experimental evaluation. Positive intervention impact is sustained for a minimum of 12 months after the program intervention ends. |
| | Promising | The evaluation trials produce valid and reliable findings. This requires a minimum of (a) one high-quality randomized controlled trial or (b) two high-quality quasi-experimental evaluations. |
| Crime Solutions and Model Programs Guide | Effective | Programs have strong evidence indicating they achieved their intended outcomes when implemented with fidelity. |
| | Promising | Programs have some evidence indicating they achieved their intended outcomes. Additional research is recommended. |
| | No Effect | Programs have strong evidence indicating that they did not achieve their intended outcomes when implemented with fidelity. |
| Coalition for Evidence-Based Policy / Top Tier Evidence Initiative | Top Tier | Interventions shown in well-designed and implemented randomized controlled trials, preferably conducted in typical community settings, to produce sizable, sustained benefits to participants and/or society. |
| | Near Top Tier | Interventions shown to meet almost all elements of the Top Tier standard, and which need only one additional step to qualify. This category includes, for example, interventions that meet all elements of the standard in a single site and just need a replication trial to confirm the initial findings and establish that they generalize to other sites. |
| National Registry of Evidence-Based Programs and Practices | | Reviewers rate two main dimensions, quality of research and readiness for dissemination, on a scale of 0.0 to 4.0, with 4.0 being the highest rating. |
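To make the Blueprints thresholds in Table 2 concrete, the Python sketch below encodes them as a simple decision rule. It is an illustration only: the `EvidenceBase` structure, its field names, and the "Not rated" label are hypothetical, and an actual Blueprints rating rests on the judgments of an independent expert panel (for example, about whether a trial is truly high quality) that no count of studies can capture.

```python
from dataclasses import dataclass


@dataclass
class EvidenceBase:
    """Hypothetical summary of the evaluations behind one program."""
    rcts: int               # high-quality randomized controlled trials
    quasi_experiments: int  # high-quality quasi-experimental evaluations
    months_sustained: int   # months a positive impact lasted after the program ended


def blueprints_category(e: EvidenceBase) -> str:
    """Apply only the study-design thresholds listed in Table 2 (a simplification)."""
    # Model: (a) two RCTs, or (b) one RCT plus one quasi-experiment,
    # with positive impact sustained for at least 12 months.
    model_design = e.rcts >= 2 or (e.rcts >= 1 and e.quasi_experiments >= 1)
    if model_design and e.months_sustained >= 12:
        return "Model"
    # Promising: (a) one RCT, or (b) two quasi-experiments.
    if e.rcts >= 1 or e.quasi_experiments >= 2:
        return "Promising"
    return "Not rated"  # hypothetical label for programs meeting neither threshold


print(blueprints_category(EvidenceBase(rcts=2, quasi_experiments=0, months_sustained=18)))
print(blueprints_category(EvidenceBase(rcts=1, quasi_experiments=1, months_sustained=6)))
```

Counting studies is the easy part: the sketch assumes every study passed to it has already been judged high quality, which is precisely the work the expert panel does.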

Appendix 2. Cost-Effectiveness and Cost-Benefit Analysis

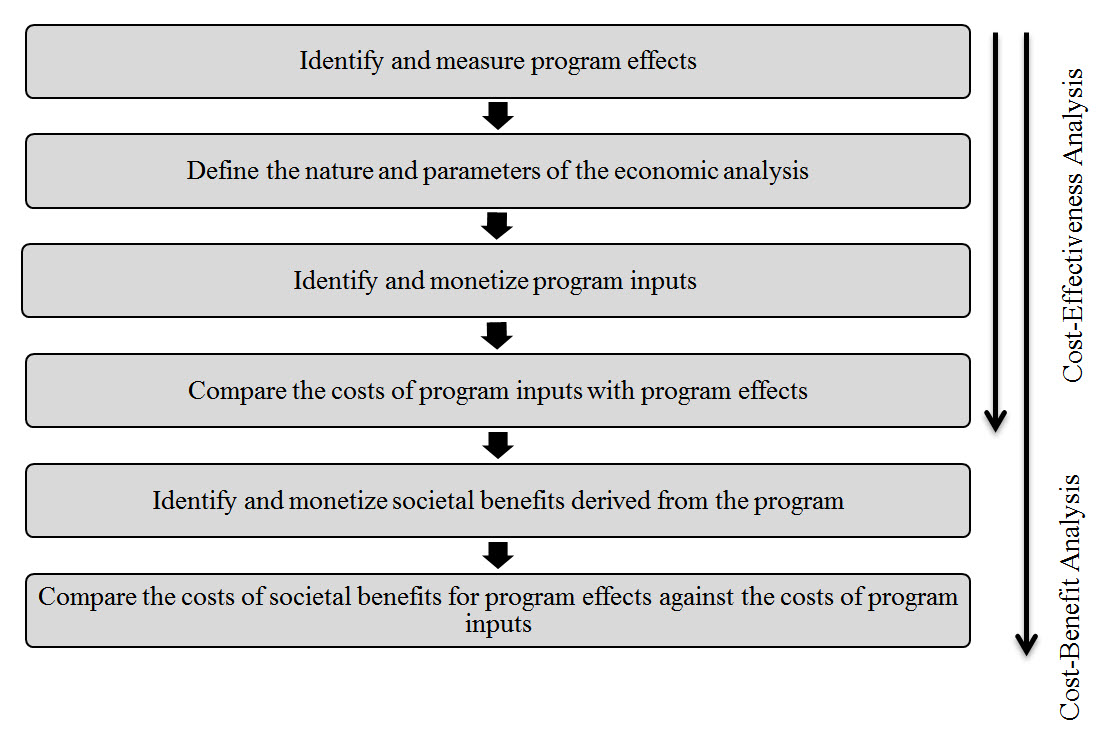

Although a number of approaches exist for evaluating programs in economic terms, two of the most commonly used techniques are cost-effectiveness analysis (CEA) and cost-benefit (or benefit-cost) analysis (CBA) (Note 38). The main difference between the two is that CEA expresses only the program costs in monetary terms and measures outcomes in their natural units (for example, arrests prevented), while CBA goes one step further and also quantifies the societal benefits of those outcomes in monetary terms (Note 39). In general, both approaches follow the process outlined below:

Figure 2. A Schematic of the Cost-Effectiveness and Cost-Benefit Analysis Process

Image Description

Figure 2, a top-to-bottom flowchart with six boxes, describes the standard procedures for conducting an economic analysis.

Step 1 (top box): Identify and measure program effects

Step 2 (second box from the top): Define the nature and parameters of the economic analysis

Step 3 (third box from the top): Identify and monetize program inputs

Step 4 (fourth box from the top): Compare the costs of program inputs with program effects

Step 5 (fifth box from the top): Identify and monetize societal benefits derived from the program

Step 6 (sixth box from the top): Compare the monetized societal benefits of program effects against the costs of program inputs

A cost-effectiveness analysis typically involves Steps 1 to 4 (top 4 boxes), whereas a benefit-cost analysis involves all six steps.

Cost-Effectiveness Analysis

A cost-effectiveness analysis (CEA) calculates the total costs of a program, then compares these costs to the program's impact or effectiveness, for example, the change in the number of police arrests. The resulting cost-effectiveness ratio provides a cost per unit of program outcome; for example, $5,000 in program costs must be invested to prevent one police arrest.

Cost Effectiveness Ratio = Total Program Costs / Net Program Effectiveness
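The ratio above can be computed in a few lines. This minimal Python sketch assumes equal-sized program and comparison groups, so that net program effectiveness is simply the difference in outcome counts; the function names and all dollar and arrest figures are hypothetical, chosen to reproduce the $5,000-per-arrest example.

```python
def net_effect(comparison_events: float, program_events: float) -> float:
    """Outcome events averted: comparison group minus program group (equal group sizes assumed)."""
    return comparison_events - program_events


def cost_effectiveness_ratio(total_program_costs: float, net_effectiveness: float) -> float:
    """Cost Effectiveness Ratio = Total Program Costs / Net Program Effectiveness."""
    if net_effectiveness <= 0:
        # With no net improvement, a cost per unit of outcome is not meaningful.
        raise ValueError("net program effectiveness must be positive")
    return total_program_costs / net_effectiveness


# Hypothetical figures, for illustration only.
averted = net_effect(comparison_events=120, program_events=70)  # 50 arrests averted
cer = cost_effectiveness_ratio(total_program_costs=250_000, net_effectiveness=averted)
print(f"${cer:,.0f} per arrest prevented")  # $5,000 per arrest prevented
```

Raising an error for a zero or negative net effect is a design choice: a program that averts nothing has no finite cost per outcome, and silently returning a number would hide that.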

Cost-Benefit Analysis

To take the economic analysis a step further and assess the ultimate value of the program, a cost-benefit analysis (CBA) may be used. A CBA assesses, in financial terms, how much society saves from the impact of a program by attaching a monetary value to potential benefits and then comparing this value to the program costs. The result is a unit-free benefit-cost ratio (BCR), for example, societal savings of $10 per $1 of program cost. CBA is a tool for comparing, in monetary terms, the advantages and disadvantages of undertaking a particular program or policy as opposed to another course of action, including doing nothing at all (Note 40).

Benefit-Cost Ratio = Total Societal Savings, Benefits or Averted Costs / Total Program Costs

A benefit-cost ratio greater than 1.0 means that the benefits of the program outweigh the costs and that the program thus produces a positive “rate of return”. The higher the BCR, the greater the return on investment. Conversely, a BCR of less than 1.0 indicates that program costs surpass the benefits gained.
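The ratio and its 1.0 break-even reading can be expressed directly. In this minimal Python sketch, the function names and the $100,000 cost and $1,000,000 savings figures are hypothetical, chosen to reproduce the example of $10 saved per $1 of program cost.

```python
def benefit_cost_ratio(total_benefits: float, total_program_costs: float) -> float:
    """Benefit-Cost Ratio = Total Societal Savings, Benefits or Averted Costs / Total Program Costs."""
    if total_program_costs <= 0:
        raise ValueError("total program costs must be positive")
    return total_benefits / total_program_costs


def interpret_bcr(bcr: float) -> str:
    """Read a BCR against the 1.0 break-even point."""
    if bcr > 1.0:
        return "benefits outweigh costs (positive return)"
    if bcr < 1.0:
        return "costs surpass benefits"
    return "break-even"


# Hypothetical program: $100,000 in costs, $1,000,000 in monetized societal savings.
bcr = benefit_cost_ratio(total_benefits=1_000_000, total_program_costs=100_000)
print(bcr, interpret_bcr(bcr))  # 10.0 benefits outweigh costs (positive return)
```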

As an example of economic analysis, a study has recently been published on the monetary benefits and costs of the Stop Now And Plan-Under 12 Outreach Project (SNAP-ORP), a cognitive–behavioural skills training and self-control program, in preventing later offending by boys (Note 41). The total cost for an “average” boy participating in SNAP was $4,641 CDN (the total cost of the SNAP-ORP program was $1,729 CDN for low-risk cases, $4,166 CDN for moderate-risk cases, and $8,503 CDN for high-risk cases). The results of this study indicate that, based on convictions alone, between $2.05 CDN and $3.75 CDN are saved for every $1 CDN spent on the program. When the estimate is scaled up to include undetected offences, between $17.33 CDN and $31.77 CDN are saved for every $1 CDN spent on the program.
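Because the benefit-cost ratio divides benefits by costs, multiplying a reported ratio by a cost recovers the implied savings. The Python sketch below applies this rearrangement to the SNAP-ORP figures above. It assumes, for illustration only, that the reported ratios apply to the $4,641 cost of an “average” participant; the function name is hypothetical, and the resulting dollar amounts are simple arithmetic, not figures reported by the study.

```python
def implied_savings(cost: float, bcr: float) -> float:
    """Rearranged BCR formula: savings = BCR x program cost."""
    return bcr * cost


# Cost for an "average" boy in SNAP-ORP, in CDN dollars (from the study summary above).
AVERAGE_COST_CDN = 4_641

# Reported savings per $1 CDN spent: (low, high) bounds for each scope of offending.
BCR_RANGES = {
    "convictions only": (2.05, 3.75),
    "including undetected offences": (17.33, 31.77),
}

for scope, (low, high) in BCR_RANGES.items():
    low_savings = implied_savings(AVERAGE_COST_CDN, low)
    high_savings = implied_savings(AVERAGE_COST_CDN, high)
    print(f"{scope}: ${low_savings:,.2f} to ${high_savings:,.2f} CDN per participant")
```

For convictions alone, this works out to roughly $9,500 to $17,400 CDN in implied savings per average participant.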

Appendix 3. Program Implementation

Practitioners and researchers in crime prevention increasingly face a common challenge: the successful, effective implementation of evidence-based practices. This growing interest in implementation-related issues coincides with the realization that merely selecting an effective program is not enough: even with the development of best practice guides, experience in the field has shown that effective programs do not always deliver the expected results. Selecting a model program is no guarantee of achieving the expected results. An effective program must be combined with high-quality implementation in order to increase the likelihood of achieving positive results among the clients served (Note 42).

To disseminate practical knowledge that can be used as guidelines, an implementation guide has recently been published (Note 43). This guide provides checklists of activities related to implementation stages, which are useful for evaluating, planning, and monitoring implementation activities across each stage of the process. As the goal here is not to repeat the information already presented in that guide, this report presents only the most important information to consider during the selection of a crime prevention program.

Implementation Stages

As illustrated in Figure 3, research has shown that the implementation process is essentially composed of four stages. These stages are not linear; the process is dynamic and iterative. The stages are also interconnected and affected by various internal and external organizational factors, and challenges addressed (or not addressed) in one stage will affect the entire implementation process (for example, high staff turnover could require an organization to return to a previous stage of implementation).

Figure 3. Implementation Stages

Image Description

Figure 3, an alternating flow chart, illustrates the four stages of the program implementation process:

Step 1. Exploration and adoption: Acquisition of information on evidence-based programs and identification of the most appropriate program

Step 2. Preparation and installation: Once the decision about program selection is made, active preparation of the site begins

Step 3. Initial implementation: Initial application of the program in the organization; this is the most difficult step

Step 4. Full implementation: The program is integrated in the community and in the organization's policies and procedures

Factors Influencing the Implementation Process

During the program selection process, it is important to consider a number of factors that may affect the implementation of the program. Having a comprehensive picture of the factors associated with the social context, the organization, the practitioners, and the program will allow for a better understanding of the nature of these factors and how they can positively (as facilitators) or negatively (as barriers) influence the implementation process. For example, in one study on the implementation of a mental health program in schools, Kam et al. (2003) observed a significant correlation between management's support for the program and the fidelity of the implementation by teachers. When these two factors were high, significant positive results were noted among the students; however, when management's support was weak, negative changes were seen among the students.

| Main factors related to social context | Main factors related to the organizations | Main factors related to the practitioners | Main factors related to the program |
|---|---|---|---|

* Factors marked with an asterisk (*) are considered implementation drivers.

Implementation Drivers

Implementation drivers (Note 44) are defined as the elements that positively affect the success of a program. Their presence increases the probability of successfully replicating a program. These key components, which relate to capacities and to the organization's infrastructure and operations, can be classified into three categories:

- Implementation drivers related to competencies: These are mechanisms for implementing, maintaining, and delivering an intervention as planned to benefit the program participants and the community. The implementation drivers in this category are:

  - Staff selection

  - Training

  - Coaching

- Implementation drivers related to organizations: These are mechanisms for creating and maintaining favourable conditions in which the program will be developed and thus facilitate the delivery of effective services. The implementation drivers in this category are:

  - Linkages with other external networks and partnerships

  - Engagement and commitment from management

  - Information management system in decision making

- Implementation drivers related to leadership: These are leadership strategies put in place to resolve various types of challenges. Many challenges relate to the integration of the new program, and leaders need to be able to adapt their leadership style (adaptive leadership), make decisions, provide advice, and support the organization's operations (technical leadership). The implementation drivers in this category are:

  - Leadership (adaptive and technical)

Figure 4. Implementation Drivers (adapted from Fixsen & Blase, 2006)

Image Description

Figure 4, a block cycle chart with three boxes shows the different implementation drivers and their components in a circular flow.

Competency Drivers (top box): includes staff selection, training and coaching

Organization Drivers (bottom right box): includes partnerships and networks, engagement from management, and data-driven decision making

Leadership Drivers (bottom left box): includes adaptive and technical leadership

Appendix 4. Program Sustainability

Sustainability is a challenge not only for crime prevention programs, but for the vast majority of programs that serve children, youth, and families. Program sustainability is a critical component in achieving long-term change in behaviours, but many programs and services face numerous challenges in achieving it. Many programs that demonstrate great results eventually fade away because they are unable to secure adequate resources to continue. Among the programs that do flourish, there are several common elements that contribute to their success.

For example, the Office of Adolescent Health (OAH) (Note 45), through informal conversations with grantees, identified several barriers to achieving sustainability: securing funding; providing services to special populations; strategic planning and prioritizing sustainability planning; achieving local buy-in; creating and maintaining partnerships; and securing local political support. Conversely, successes in these areas include diversifying funding streams; adapting services to special populations; using assessments to prioritize services; securing community support; and enhancing collaboration with local networks. These challenges and successes relate to adolescent health programs, but many parallels can be drawn with crime prevention programs.

Through extensive research and with input from OAH grantees, a sustainability framework and guide were developed. Table 4 presents eight sustainability factors that should be considered and the keys to success for each of them.

| Factors | Keys to Success |
|---|---|
| Strategic planning | |
| Assessing the environment | |
| Adaptability | |
| Secure community support | |
| Integrate programs and services into local infrastructure | |
| Build a team of leaders | |
| Effective and strategic partnerships | |
| Secure diverse financial opportunities | |

References

Andrews, D. A., & Bonta, J. (2010). The psychology of criminal conduct (5th ed.). New York, NY: Routledge.

Aos, S., et al. (2001). The comparative costs and benefits of programs to reduce crime (version 4.0). Olympia, WA: Washington State Institute for Public Policy.

Bania, M., & Kandalaft, A. (2013). Striving for sustainability: Six key strategies to guide your efforts. Ottawa, ON: YOUCAN Mentoring Program.

Baum, K., Blakeslee, M., Lloyd, J., & Petrosine, A. (2013). Violence prevention: Moving from evidence to implementation. Discussion paper. Washington, DC: National Academy of Sciences.

Blandford, A., & Osher, F. (2012). A checklist for implementing evidence-based practices and programs (EBPs) for justice-involved adults with behavioral health disorders. Delmar, NY: SAMHSA's GAINS Center for Behavioral Health and Justice Transformation.

Blase, K., & Fixsen, D. (2013). ImpleMap: Exploring the implementation landscape. Chapel Hill, NC: National Implementation Research Network, Frank Porter Graham Child Development Institute, University of North Carolina at Chapel Hill.

Blase, K., & Fixsen, D. (2013). Stages of implementation analysis: Where are we? Chapel Hill, NC: University of North Carolina at Chapel Hill.

Coalition for Evidence-Based Policy Working Group (2006). Which study designs can produce rigorous evidence of program effectiveness? A brief overview. Coalition for Evidence-Based Policy working paper. Available from: http://coalition4evidence.org/wp-content/uploads/2012/12/RCTs_first_then_match_c-g_studies-FINAL.pdf

Cohen, M. A. (2000). Measuring the costs and benefits of crime and justice. In G. LaFree (Ed.), Measurement and analysis of crime and justice. Washington, DC: National Institute of Justice, US Department of Justice.

Crosse, S., et al. (2011). Prevalence and implementation fidelity of research-based prevention programs in public schools - Final report. Prepared for US Department of Education, Office of Planning, Evaluation and Policy Development Policy and Program Studies Service. Rockville, MD: Westat.

Dhiri, S., & Brand, S. (1999). Analysis of costs and benefits: Guidance to evaluators. Crime Reduction Programme Guidance Note 1. London, UK: Home Office.

Durlak, J., & DuPre, E. (2008). Implementation matters: A review of research on the influence of implementation on program outcomes and the factors affecting implementation. American Journal of Community Psychology, 41, 327-350.

Evidence-Based Associates – Strengthening Families, Supporting Communities (no date). Evidence-based programs. Available from: http://www.evidencebasedassociates.com/the_difference/evidence-based_programs.html

Farrington, D. P., & Koegl, J. (2014). Monetary benefits and costs of the Stop Now And Plan program for boys aged 6–11, based on the prevention of later offending. Journal of Quantitative Criminology (online first). DOI: 10.1007/s10940-014-9240-7.

Federal Provincial Territorial Working Group on Crime Prevention (2014a). Framing paper – Advancing evidence-based crime prevention and sustainability. Unpublished report. Ottawa, ON: Public Safety Canada.

Federal Provincial Territorial Working Group on Crime Prevention (2014b). Inventory of evidence-based crime prevention programs in Canada. Unpublished report. Ottawa, ON: Public Safety Canada.

Fixsen, D., & Blase, K. (2006). “What works” for implementing “what works” to achieve consumer benefits. Tampa, FL: National Implementation Research Network, University of South Florida, Louis de la Parte Florida Mental Health Institute.

Fixsen, D., et al. (2005). Implementation research: A synthesis of the literature. Tampa, FL: National Implementation Research Network, University of South Florida, Louis de la Parte Florida Mental Health Institute.

Fixsen, D., et al. (2009). Core implementation components. Research on Social Work Practice, 19(5), 531-540.

Fixsen, D., et al. (2013). Implementation drivers: Assessing best practices. Tampa, FL: National Implementation Research Network, University of South Florida, Louis de la Parte Florida Mental Health Institute.

Fratello, J., et al. (2013). Measuring success: A guide to becoming an evidence-based practice. Models for Change – Systems Reform in Juvenile Justice. New York, NY: Vera Institute of Justice.

Gabor, T. (2011). Evidence-based crime prevention programs: A literature review. Submitted to the Palm Beach County Board of County Commissioners and Criminal Justice Commission.

Gray, M., et al. (2012). Implementing evidence-based practice: A review of the empirical research literature. Research on Social Work Practice, 23(2), 257-266.

Greenwood, P. W., & Welsh, B. C. (2012). Promoting evidence-based practice in delinquency prevention at the state level. Criminology & Public Policy, 11(3), 493-513.

Henggeler, S., & Schoenwald, S. (2011). Evidence-based interventions for juvenile offenders and juvenile justice policies that support them. Social Policy Report, 25(1), 1-28.

Institute for Educational Leadership (no date). Building sustainability in demonstration projects for children, youth and families. Toolkit Number 2 – Systems Improvement Training and Technical Assistance Project. Washington, DC: OJJDP.

Justice Research and Statistics Association (JRSA), Bureau of Justice Assistance, US Department of Justice (BJA), National Criminal Justice Association (NCJA) (2014). An introduction to evidence-based practices. Available from: http://www.jrsa.org/projects/ebp_briefing_paper_april2014.pdf

McIntosh, C., & Li, J. (2012). An introduction to economic analysis in crime prevention: The why, how & so what. Ottawa, ON: National Crime Prevention Centre, Public Safety Canada.

Metz, A. (2007). A 10-step guide to adopting and sustaining evidence-based practices in out-of-school time programs. Brief Research-to-Results, Child Trends, Part 2 in a Series on Fostering the Adoption of Evidence-Based Practices in Out-Of-School Time Programs. Washington, DC: Child Trends.

Metz, A., Blase, K., & Bowie, L. (2007). Implementing evidence-based practices: Six “drivers” of success. Brief Research-to-Results, Child Trends, Part 3 in a Series on Fostering the Adoption of Evidence-Based Practices in Out-Of-School Time Programs. Washington, DC: Child Trends.

Metz, A., Bowie, L., & Blase, K. (2007). Seven activities for enhancing the replicability of evidence-based practices. Brief Research-to-Results, Child Trends, Part 4 in a Series on Fostering the Adoption of Evidence-Based Practices in Out-Of-School Time Programs. Washington, DC: Child Trends.

Mihalic, S., et al. (2004a). Successful program implementation: Lessons from Blueprints. Juvenile Justice Bulletin. Washington, DC: US Department of Justice, Office of Justice Programs, Office of Juvenile Justice and Delinquency Prevention.

Mihalic, S., et al. (2004b). The importance of implementation fidelity. Emotional & Behavioral Disorders in Youth, 4(4), 83-105.

Morgan, A., et al. (no date). Effective crime prevention interventions for implementation by local government. Reports Research and Public Policy Series. Project no. 209. Canberra, Australia: Australian Institute of Criminology (AIC).

National Center for Chronic Disease Prevention and Health Promotion (Division of Adult and Community Health), Centers for Disease Control and Prevention (no date). A sustainability planning guide for healthy communities. Available from: http://www.cdc.gov/nccdphp/dch/programs/healthycommunitiesprogram/pdf/sustainability_guide.pdf

O'Connor, C., Small, S. A., & Cooney, S. M. (2007). Program fidelity and adaptation: Meeting local needs without compromising program effectiveness. What Works, Wisconsin – Research to Practice Series, 4. Madison, WI: University of Wisconsin–Madison/Extension.

Proctor, E. (2011, August). Implementation outcomes. Center for Mental Health Services Research, Washington University in St. Louis. Presentation at the 2011 National Child Welfare Evaluation Summit, Washington, DC.

Proctor, E., et al. (2010). Outcomes for implementation research: Conceptual distinctions, measurement challenges, and research agenda. Administration and Policy in Mental Health and Mental Health Services Research, 38, 65-76.

Promise Neighborhoods Institute (no date). Evidence-based practice: A primer for promise neighborhoods. Commissioned by the Promise Neighborhoods Institute at PolicyLink by Nilofer Ahsan at the Center for the Study of Social Policy.

Przybylski, R. (2008). What works. Effective recidivism reduction and risk-focused prevention programs. A compendium of evidence-based options for preventing new and persistent criminal behavior. Denver, CO: RKC Group.

Puddy, R. W., & Wilkins, N. (2011). Understanding evidence part 1: Best available research evidence. A guide to the continuum of evidence of effectiveness. Atlanta, GA: Centers for Disease Control and Prevention.

Rempel, M. (2014). Evidence-based strategies for working with offenders. Washington, DC: Center for Court Innovation, Bureau of Justice Assistance, US Department of Justice.

SAMHSA's National Registry of Evidence-based Programs and Practices (2012). A road map to implementing evidence-based programs. Available from: http://www.nrepp.samhsa.gov/courses/Implementations/NREPP_0101_0010.html

SAMHSA's National Registry of Evidence-Based Programs and Practices (no date). Questions to ask as you explore the possible use of an intervention. Available from: http://www.nrepp.samhsa.gov/pdfs/questions_to_ask_developers.pdf

Savignac, J., & Dunbar, L. (2014). Guide on the implementation of evidence-based programs: What do we know so far? Ottawa, ON: Public Safety Canada.

Sherman, L., et al. (1997). Preventing crime: What works, what doesn't, what's promising. A report to the United States Congress prepared for the National Institute of Justice.

Small, S. (2009). Evidence-informed program improvement: Using principles of effectiveness to enhance the quality and impact of youth and family programs. Presented at the Iowa State Webinar, University of Wisconsin-Madison/Extension. Madison, WI.

Small, S. A., et al. (2007). Guidelines for selecting an evidence‐based program: Balancing community needs, program quality, and organizational resources. What Works, Wisconsin – Research to Practice Series, 3. Madison, WI: University of Wisconsin–Madison/Extension.

Society for Prevention Research (no date). Standards of evidence - Criteria for efficacy, effectiveness and dissemination. Falls Church, VA: Author.

Stephenson, R., et al. (2014). Model program guide – Implementation guides: Background and user perspectives on implementing evidence-based programs. Development Services Group Inc. (DSG). Submitted to the Office of Juvenile Justice and Delinquency Prevention.

The Finance Project (2002). Sustaining comprehensive community initiatives – Key elements for success. Finance Strategy Brief. Available from: http://www.ccitoolsforfeds.org/doc/Sustaining_CCIs_Key_Elements_to_Success.pdf

The Pew-MacArthur (2013). The Pew-MacArthur results first initiative, states' use of cost-benefit analysis: Improving results for taxpayers. Washington, DC: The Pew Charitable Trusts and MacArthur Foundation.

The Pew-MacArthur (2014). Evidence-based policymaking – A guide for effective government. Washington, DC: The Pew Charitable Trusts and MacArthur Foundation.

US Department of Health and Human Services, Office of Adolescent Health. (2014a). Building sustainable programs: The framework. Washington, DC: Child Trends.

US Department of Health and Human Services, Office of Adolescent Health. (2014b). Building sustainable programs: The resource guide. Washington, DC: Child Trends.

Vincent, G., Guy, L., & Grisso, T. (2012). Risk assessment in juvenile justice: A guidebook for implementation. National Youth Screening & Assessment Project. Washington, DC: MacArthur Foundation and Models for Change – Systems Reform in Juvenile Justice.

Wandersman, A., et al. (2008). Bridging the gap between prevention research and practice: The interactive systems framework for dissemination and implementation. American Journal of Community Psychology, 41, 171-181.

Welsh, B. (2007). Evidence-based crime prevention: Scientific basis, trends, results and implications for Canada. Ottawa, ON: National Crime Prevention Centre, Public Safety Canada.

Welsh, B. C., & Farrington, D. P. (2001). Monetary value of preventing crime. In B. Welsh, D. Farrington, & L. Sherman (Eds.), Costs and benefits of preventing crime. Boulder, CO: Westview Press.

Wiseman, S., et al. (2007). Getting to outcomes: 10 steps for achieving results-based accountability. RAND Health, supported by the Centers for Disease Control and Prevention.

Endnotes

- 1

Federal Provincial Territorial Working Group on Crime Prevention (2014a)

- 2

The National Institute of Justice's Crime Solutions website provides separate definitions for “program” and “practice”. Programs are a set of specific activities implemented in accordance with guidelines in order to achieve specific results. Practices are a general class of programs, strategies or procedures that share similar characteristics (http://www.crimesolutions.gov/default.aspx). In this report, “program” will be used as a generic expression that encompasses both definitions.

- 3

The Pew-MacArthur (2014)

- 4

For more information, see Coalition for Evidence-Based Policy Working Group (2006); Gabor (2011); Society for Prevention Research (no date); Welsh (2007).

- 5

The classification systems presented in Appendix 1 were all developed in the United States; no such classification system for crime prevention programs exists in Canada. Because of this limitation, there may be a gap between the programs documented in the literature and other crime prevention programs that exist in local communities.

- 6

For more information about using and implementing risk assessment tools, see Vincent et al. (2012), Risk Assessment in Juvenile Justice: A Guidebook for Implementation. The primary purpose of that guidebook is to provide a structure for juvenile probation agencies striving to implement risk assessment or to improve their current risk assessment practices. In the guidebook, risk assessment refers to the practice of using a structured tool that combines information about youth to classify them as low, moderate or high risk for reoffending or continued delinquent activity, and to identify factors that might reduce that risk on an individual basis.

- 7

Henggeler & Schoenwald (2011); Rempel (2014); Small (2009)

- 8

The Centers for Disease Control and Prevention (CDC, US) has developed a tool, the Continuum of Evidence of Effectiveness, to foster a common understanding of what "best available research evidence" means in the field of violence prevention. The Continuum also provides a common language for researchers, practitioners, and policy-makers when discussing evidence-based decision making. For more information, see Puddy & Wilkins (2011).

- 9

Gabor (2011)

- 10

The Pew-MacArthur (2014)

- 11